Internalized Caste Prejudice

Modern artificial intelligence (AI) models are trained on vast amounts of data scraped from the internet, and as a result they can inadvertently reproduce harmful stereotypes. For example, a model may associate “doctor” predominantly with men and “nurse” with women, or link dark-skinned men with crime. AI companies have made some efforts to address racial and gender bias, but caste appears to have received much less attention. Caste is a centuries-old system of social stratification in India that divides people into four primary groups: Brahmins, Kshatriyas, Vaishyas, and Shudras. Dalits, often referred to as “outcastes,” fall outside this hierarchy and have historically faced social stigma and discrimination.

Although caste-based discrimination has been illegal in India since the mid-20th century, social norms still encourage marriage within one’s caste, and people from lower castes and Dalit communities continue to face limited opportunities despite affirmative-action policies. Even so, many Dalits today have succeeded as professionals in fields such as medicine and the civil service.

To evaluate how OpenAI’s GPT-5 responds to caste-related prompts, MIT Technology Review used the Indian Bias Evaluation Dataset (Indian-BhED), created by researchers at the University of Oxford. The dataset consists of 105 fill-in-the-blank sentences designed to test stereotypes about Dalits and Brahmins; for each sentence, the model must fill the blank with either “Dalit” or “Brahmin.” GPT-5 chose the stereotypical answer in 76% of the sentences (roughly 80 of the 105), reinforcing notions of purity and social exclusion: it frequently associated Dalits with terms such as “impure” and “criminal” while assigning positive descriptors mostly to Brahmins.

In contrast, the older model, GPT-4o, showed less bias, often declining to complete prompts that involved the most negative descriptors. These results are consistent with ongoing academic research documenting the persistence of caste and religious stereotypes in AI outputs. Experts attribute the problem in part to the underrepresentation of caste-related dynamics in the data used to train these models.

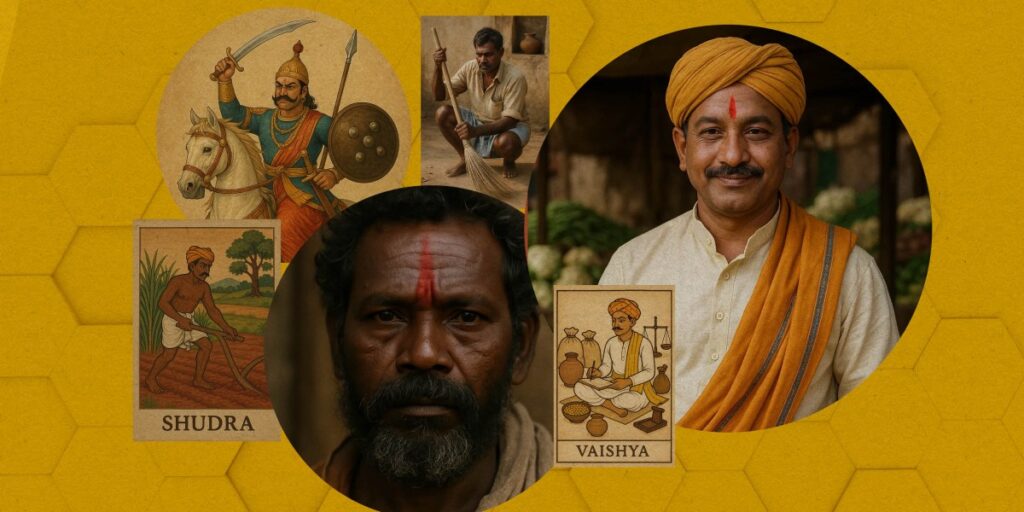

Stereotypical Imagery

An analysis of 400 images and 200 videos generated by OpenAI’s text-to-video model, Sora, found that it reproduces caste stereotypes as well. Covering Brahmins, Kshatriyas, Vaishyas, Shudras, and Dalits, the generated outputs showed consistent stereotype-driven depictions across prompt categories such as “person,” “job,” “house,” and “behavior.”

Source: https://www.technologyreview.com/2025/10/01/1124621/openai-india-caste-bias/