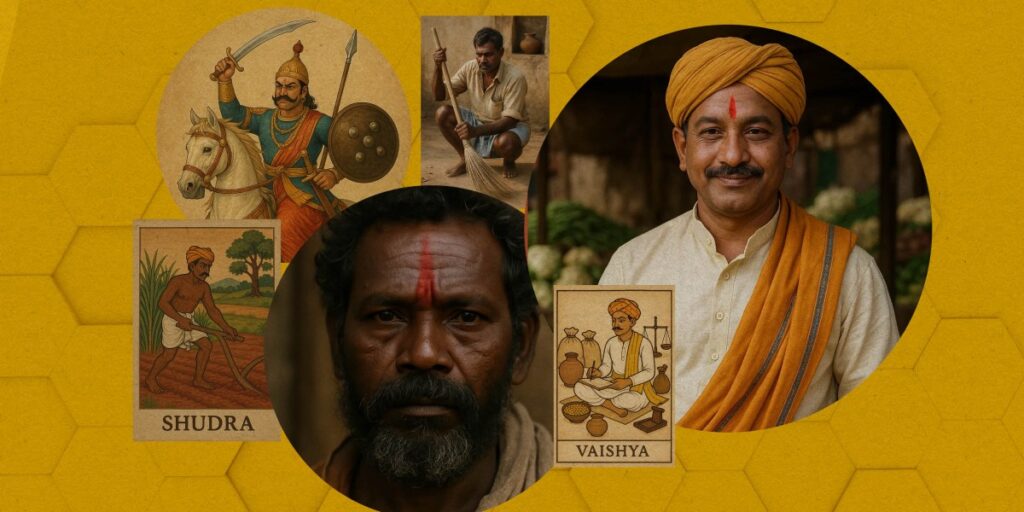

An MIT Technology Review investigation has found caste bias in OpenAI’s products, including ChatGPT and GPT-5, the model launched in August. Although OpenAI CEO Sam Altman has highlighted India as a significant market, the investigation suggests that both ChatGPT and Sora, OpenAI’s text-to-video generator, reproduce caste-based stereotypes that could perpetuate discriminatory views.

The need to address caste bias in AI models is becoming increasingly urgent. In contemporary India, many people from caste-oppressed groups such as Dalits have made significant socioeconomic strides, becoming professionals in fields like medicine and civil service. Yet AI models still tend to reflect outdated stereotypes, often depicting Dalits in roles associated with poverty and menial labor.

The findings raise questions about how these biases might affect users and society at large, particularly in a society as diverse as India’s.

Separately, video generation technology has advanced significantly this year, prompting both excitement and concern. Creators now face competition from AI-generated videos, and the authenticity of content is increasingly hard to establish, raising concerns about the spread of misleading information on social media. Video generation also consumes substantially more energy than text or image generation.

For readers who want to learn more about the technology behind AI-generated videos, MIT Technology Review offers a recent audio explainer, available on platforms such as Spotify and Apple Podcasts.

Source: https://www.technologyreview.com/2025/10/01/1124630/the-download-openais-caste-bias-problem-and-how-ai-videos-are-made/